Automating TELOS File Processing with N8N, Ollama, and Obsidian

Introduction

My goal for this project was to automate the processing of TELOS files using my local Ollama LLM and my N8N instance. The automation needed to:

- Read my TELOS file.

- Process the content through custom AI prompts.

- Save the processed results back into my Obsidian vault for long-term reference.

This way, instead of manually moving files around and pasting content, I can focus on the output: insights and summaries I can use for personal growth and cybersecurity projects.

Why I Built This (And Why You Might Care)

Honestly? I was getting tired of running prompts manually in ChatGPT or Claude. It worked, but it felt like busywork that got in the way of what I really wanted: the insights. I wanted to spend my time reflecting and building, not copy-pasting text. Automating this workflow let me remove the friction so I could focus on growth and research.

Step 1: Preparing the File for Processing

The first step was to get my TELOS file into a location where N8N could easily process it.

- Within Windows, I created a scheduled task that ran a PowerShell script.

- The script copies the TELOS.md file from my working directory into my N8N share folder.

This ensured that once a week, the latest version of my TELOS file was available for processing. I chose weekly over daily triggers because honestly? Daily processing was overkill. Weekly gives me enough time to accumulate meaningful changes without drowning in automated outputs.
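As a rough illustration of the copy step, here is a minimal Python sketch. It is a stand-in, not the PowerShell script described above, and the function name `stage_telos_file` and the example paths in the comment are assumptions made for the example.

```python
import shutil
from pathlib import Path


def stage_telos_file(source: Path, share_dir: Path) -> Path:
    """Copy the TELOS file into the share folder, replacing last week's copy."""
    if not source.is_file():
        raise FileNotFoundError(f"TELOS file not found: {source}")
    share_dir.mkdir(parents=True, exist_ok=True)
    destination = share_dir / source.name
    # copy2 overwrites an existing file and keeps the modification time,
    # which makes it easy to see how fresh the staged copy is.
    shutil.copy2(source, destination)
    return destination


# Hypothetical usage; substitute your own working directory and share folder:
# stage_telos_file(Path("C:/Notes/TELOS.md"), Path("D:/n8n-share/input"))
```

Scheduling stays outside the script, so the same function works whether it is triggered by a Windows scheduled task or something else.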

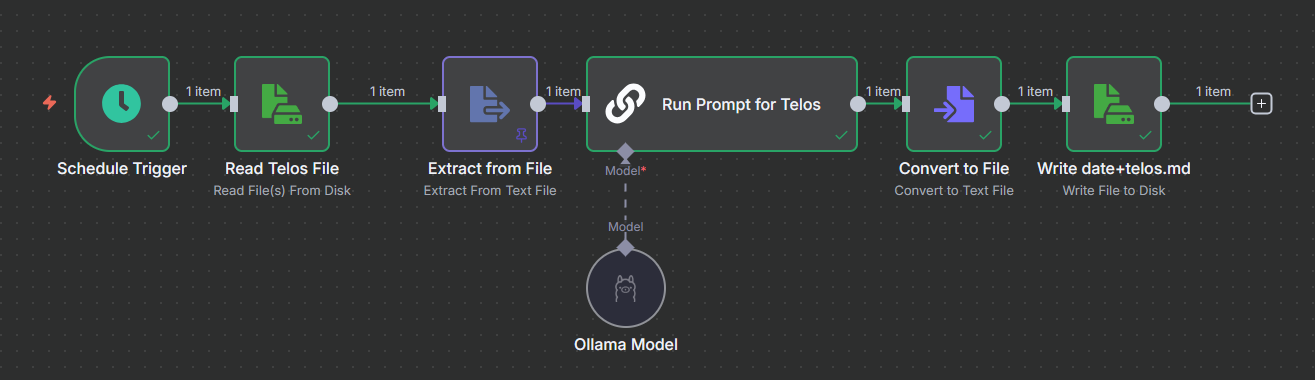

Step 2: Building the N8N Workflow

With the file in place, I created an N8N workflow that ties everything together. This runs on my main homelab server alongside my other Docker containers and servers.

Workflow Breakdown

- Scheduled Trigger – Runs once a week to keep the process consistent.

- Read Binary File Node – Reads the TELOS.md file from disk as binary data.

- Extract From File Node – Converts the file's contents into JSON so the other nodes can use them.

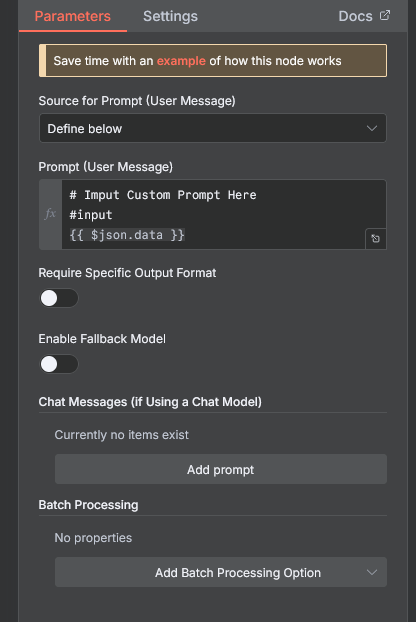

- AI Agent Node (Ollama) – Processes the file with a custom TELOS prompt.

The prompt templates came from the Fabric Project, which is designed for structured self-reflection and decision-making. Examples include summarizing goals, finding blind spots, or transforming raw notes into actionable insights.

For now, I am using the Fabric prompts as-is, but they are flexible enough to customize later. I also chose Ollama over cloud APIs because I do not want my personal reflection data leaving my network. Call it paranoia or just good practice, but introspection feels like it should stay private.

- Convert To File Node – Converts the JSON data back into a binary format for saving.

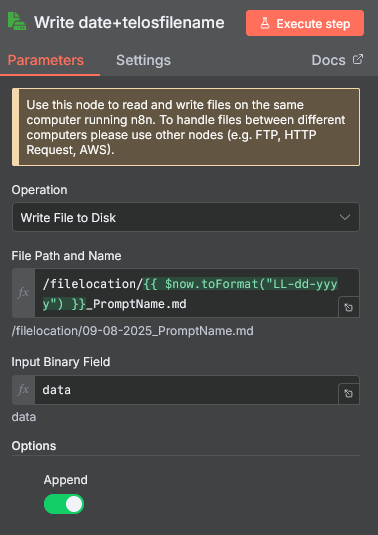

- Write Binary File Node – Saves the processed result to disk using a consistent filename pattern.
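To make the AI step a little more concrete, the sketch below shows roughly what one prompt run looks like when sent straight to Ollama's local HTTP API, outside of N8N. It is not how the AI Agent node is configured internally. The model name, the default context size, and the helper names `build_request` and `run_prompt` are assumptions for illustration; the endpoint and port are Ollama's defaults.

```python
import json
import urllib.request

# Ollama's default local endpoint; adjust the host if Ollama runs elsewhere.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(system_prompt: str, telos_text: str,
                  model: str = "llama3.1", num_ctx: int = 8192) -> dict:
    """Assemble the JSON body for one non-streaming generation."""
    return {
        "model": model,                   # assumed model name, use whatever you have pulled
        "system": system_prompt,          # the TELOS prompt template
        "prompt": telos_text,             # the contents of TELOS.md
        "stream": False,                  # return one JSON object instead of a stream
        "options": {"num_ctx": num_ctx},  # context window size in tokens
    }


def run_prompt(system_prompt: str, telos_text: str, **kwargs) -> str:
    """Send the request to Ollama and return the generated text."""
    body = json.dumps(build_request(system_prompt, telos_text, **kwargs)).encode("utf-8")
    request = urllib.request.Request(
        OLLAMA_URL, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())["response"]
```

Because everything goes to `localhost`, the reflection data never leaves the machine, which is the point of choosing Ollama here.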

Step 3: Returning Processed Files to Obsidian

I created another scheduled task that ran a second PowerShell script. The script copied the processed markdown files from N8N’s output folder into my Obsidian vault and cleaned up temporary files to prevent clutter.

Now, every week, my Obsidian vault automatically receives freshly processed TELOS entries without me touching anything.
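The return trip can be sketched the same way. This is a minimal Python stand-in for the second PowerShell script, not the script itself. The function name `collect_processed` is hypothetical, and "cleanup" here only means deleting each markdown file from the output folder after it has been copied.

```python
import shutil
from pathlib import Path


def collect_processed(output_dir: Path, vault_dir: Path) -> list[Path]:
    """Copy processed markdown files into the vault, then remove the originals."""
    vault_dir.mkdir(parents=True, exist_ok=True)
    delivered = []
    for md_file in sorted(output_dir.glob("*.md")):
        target = vault_dir / md_file.name
        shutil.copy2(md_file, target)  # overwrites an older file with the same name
        md_file.unlink()               # cleanup: leaves the output folder empty for next week
        delivered.append(target)
    return delivered
```

Copying first and deleting second means a failed copy leaves the processed file in place to be picked up on the next run.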

Why This Matters

This project wasn’t just about moving files around. It solved a few real problems for me:

- Consistency – Weekly triggers keep my processed TELOS output in step with the latest version of the file.

- Automation – No more copy/pasting between tools.

- Integration – Combines structured reflection (TELOS) with AI assistance (Ollama) and my note-taking system (Obsidian).

- Scalability – I can reuse this workflow with other prompts, notes, or even logs from my homelab.

What I Learned (The Hard Way)

This wasn’t smooth sailing. My first attempt worked fine, but the workflow got complicated as I added more prompts. The biggest gotcha was that I had pinned data on a node for testing, so several of the output files ended up using the same prompt. Once I unpinned the data, the workflow began working as intended. Another gotcha was the context window: I had to increase it so the whole file would fit into the model. As the file gets bigger, it may need to be chunked for processing, which is something to keep in mind if you’re planning to use this long term.
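If chunking does become necessary, one simple approach is to split the file at markdown headings and pack whole sections into chunks under a size budget. The sketch below is one possible way to do that, not something the current workflow does. It uses a character count as a rough proxy for tokens, treats any line starting with `#` as a heading (so a tag at the start of a line or a comment inside a code block would also split), and the 6000-character default is an arbitrary assumption.

```python
def split_sections(text: str) -> list[str]:
    """Split markdown into sections, starting a new one at each heading line."""
    sections: list[str] = []
    current: list[str] = []
    for line in text.splitlines(keepends=True):
        if line.startswith("#") and current:
            sections.append("".join(current))
            current = []
        current.append(line)
    if current:
        sections.append("".join(current))
    return sections


def chunk_markdown(text: str, max_chars: int = 6000) -> list[str]:
    """Greedily pack whole sections into chunks of at most max_chars.

    A single section longer than max_chars is kept intact as its own
    oversized chunk rather than being cut mid-section.
    """
    chunks: list[str] = []
    current = ""
    for section in split_sections(text):
        if current and len(current) + len(section) > max_chars:
            chunks.append(current)
            current = ""
        current += section
    if current:
        chunks.append(current)
    return chunks
```

Each chunk can then be run through the same prompt separately, at the cost of the model no longer seeing the whole file at once.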

What I’d Do Differently Next Time

Instead of creating nodes and linking them up as I went just to get it working, I would first plan exactly what I wanted the output to be. I would also manage the prompts better, for example in a database or flat file that can be referenced repeatedly and versioned. Right now I have simply duplicated the nodes for each prompt, so the workflow looks like a mess.
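A flat-file prompt library could be as small as one JSON file that maps each prompt name to a version and its text, with a single loop in place of the duplicated nodes. The sketch below assumes that layout. The file format, the field names, and the functions `load_prompts` and `plan_jobs` are all hypothetical, not part of the current workflow.

```python
import json
from pathlib import Path


def load_prompts(path: Path) -> dict[str, dict]:
    """Read the prompt library: {name: {"version": ..., "text": ...}}."""
    return json.loads(path.read_text(encoding="utf-8"))


def plan_jobs(prompts: dict[str, dict], telos_text: str) -> list[dict]:
    """Build one job per prompt so a single loop can process them all."""
    return [
        {
            "name": name,
            "version": entry["version"],  # recorded so outputs can be traced to a prompt version
            "system": entry["text"],
            "prompt": telos_text,
        }
        for name, entry in sorted(prompts.items())
    ]
```

Adding a new prompt then means adding one entry to the file rather than copying another set of nodes.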

Next Steps & Improvements

This is just version one. Some future ideas:

- Add notifications when a new processed file is ready.

- Expand beyond TELOS files: feed daily notes, logs, or captured research into the same pipeline.

- Add a library of prompts for processing files.

- Add more prompt types from Fabric.

- Expand to other types of files beyond TELOS.

Final Thoughts

Is this over-engineered for processing TELOS files? Maybe. Do I care? Not really. There’s something satisfying about automating the tedious parts so I can focus on what actually matters: the insights and analysis.

The workflow might look like a mess right now with all the duplicated nodes, but it works. Sometimes getting something functional is more important than making it pretty from the start. I can always clean it up later when I implement better prompt management.

If you’re thinking about building something similar, don’t overthink it. Start simple, get it working, then iterate. My setup isn’t perfect, but it’s already saving me time and helping me stay consistent with processing my TELOS files.